if you happen to have more than one internet connection and they have different usable bandwidths - which is no longer a rarity today - it becomes an interesting element of network design. how would you use these links optimally?

i have to admit that i was provoked to sit down and write this series of posts by Marcin Ślęczek’s post on the ccie.pl forum. Marcin is the CEO of networkers.pl, but at heart he’s a network engineer who sometimes fights with interesting problems. although I already had something like a solution in my head for the problem I was struggling with in my home network, my inability to solve Marcin’s problem immediately provoked me to describe the problem and its potential solutions from the inside out.

of course, as we live in the times of SDN “solutions”, where everything has to carry the “software-defined” label or it doesn’t count, in most cases the answer to such a problem would be “get yourself an SDWAN appliance”. this post is not about marketing, however, and focuses on good old layer three (with a bit of layer four as well).

This could be a separate topic with many digressions - most of the so-called “SDWAN solutions” on the market today are simply a Linux distribution patched with some scripts and a GUI (often from some open source library). The vast majority of these solutions do not scale in practice (well, sure - everything scales on the slides, but…), they do not have a uniform API, and the way they monitor key parameters to decide, for example, when to change the active link is very… lax. Moreover, they don’t work without the same “solution” on the other end of the connection (which you usually don’t have, because you have only one router), so in general they are just useless for the purposes of this article. I’ll pass over them in eloquent silence as unworthy of a network engineer’s deliberation, but perhaps we will come back to them later.

so let’s look at the issue from scratch. for the time being we’ll focus only on the features available on standard routers, without unnecessary “software disturbances”.

before we go further: i’ll be using the following four prefixes from the IANA special-purpose address space, described in RFC 2544 (198.18.0.0/15) and RFC 5737 (the three /24 documentation networks), to avoid address conflicts:

- 192.0.2.0/24

- 198.18.0.0/15

- 198.51.100.0/24

- 203.0.113.0/24

we’ll treat them as “public” IPs

long, long ago

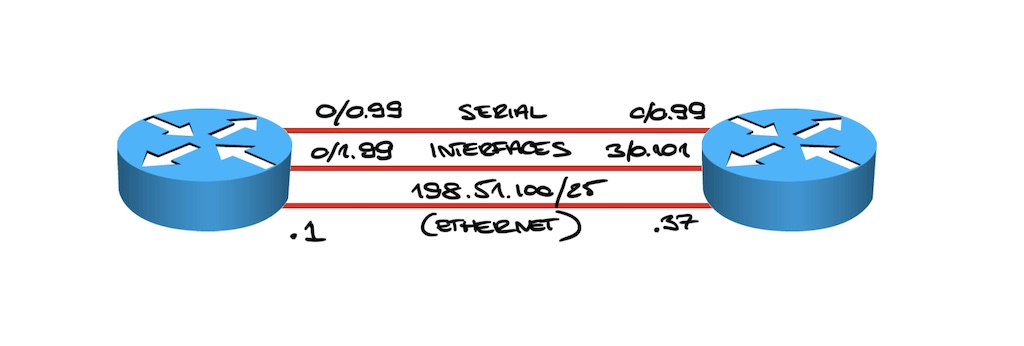

on Cisco routers you could load balance traffic “since forever” using multiple routing definitions with different next-hop IPs or interfaces. so, if you had following simplistic network:

you could just define two routes, configuring them over different interfaces (for point-to-point ones) or different next-hop IPs:

ip route 0.0.0.0 0.0.0.0 Serial0/0/0.99

ip route 0.0.0.0 0.0.0.0 Serial0/0/1.99

if you happened to have three - define three routes:

ip route 0.0.0.0 0.0.0.0 Serial0/0/0.99

ip route 0.0.0.0 0.0.0.0 Serial0/0/1.99

ip route 0.0.0.0 0.0.0.0 198.51.100.37

as you can see, when configuring routes we can mix interface names and next-hop IP addresses. on point-to-point interfaces you can simply point the route at the interface name without any problems, because by definition the recipient of the packet is the other side, and the L2 header rewrite information is known in advance. on broadcast interfaces, however, such a definition makes the router send an ARP query for every new destination IP it has traffic for (and rely on the gateway answering with proxy ARP). needless to say, this affects performance. it’s one of the most common mistakes made by novice network engineers.

… and so on for up to 32 or 64 parallel routes (depending on the platform). for “home” needs that should be more than enough

Cisco Express Forwarding, or CEF, is a solution that lets you build high-performance structures for forwarding traffic on pure software platforms (older routers, ranging from the AGS to the Cisco ISR 1900/2900/3900 series, and now Cisco CSR 1000v/8000v or IOS-XRv9000), on mixed software/hardware routers (old Cisco 7200 routers with the NSE-1 processor, 7300 with NSE-1 and NSE-100 cards, GSR 12000 routers, 7600 routers) and on routers built around a dedicated hardware architecture (like the current Cisco ASR 9000, CRS-1/3 or finally Cisco 8000, yet another fastest and most scalable router in the world). CEF uses a sophisticated process to create an optimized FIB table and save time during traffic processing.

there’s a completely different achievement that we as Cisco can also boast about. we’ve built a forwarding stack that allows routing or switching traffic with services at unmatched performance - Vector Packet Processing. we donated the code to the Linux Foundation and it is currently in use in the OpenStack project and some other solutions. it beats other similar stacks and has been under development since the early 2000s. today it drives both the Cisco CSR 1000v/8000v and XRv9000 routers.

in order to select the output interface for each packet as quickly as possible and with the same (predictable) delay (and to perform additional operations, such as imposing new L2 information on the packet - see the CEF description above), CEF uses a hash table. in this case the table stores pointers to egress interfaces, thanks to which a search in the information tree can be performed very quickly and without collisions (a collision means that more than one entry sits under one reference in memory, so traffic handling is delayed by an additional cycle or cycles to check multiple items - which already means a variable delay). CEF was designed from the beginning to support many thousands (1984!) of output interfaces (and prefixes), but today it can scale to millions of output interfaces and hundreds of millions of prefixes.

which doesn’t mean any Cisco router can scale to that size today - it just means we can build something like that and CEF will do fine :)

it won’t hurt to have many interfaces

using the same principle as for generating the forwarding tree information (to build the FIB), CEF also uses hash tables when we have many parallel output interfaces. this time the hash is applied to the contents of the packet (actually, to the packet headers) to select the output interface per session in a predictable manner. this is because, for example, TCP sessions don’t like being spread over links with different latencies.
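
to make the idea concrete, here is a minimal Python sketch of per-session interface selection. it is only an illustration: the real CEF hash function is not SHA-256, and the names `flow_bucket`, `egress_for` and the 16-entry `BUCKETS` table are assumptions made up for this example.

```python
import hashlib
import ipaddress
import struct

# Hypothetical bucket table: 16 hash buckets spread over two parallel links.
BUCKETS = ["Serial0/0/0.99", "Serial0/0/1.99"] * 8


def flow_bucket(src_ip, dst_ip, src_port, dst_port, proto, n_buckets):
    """Map the header fields of a flow to a bucket number (illustrative hash only)."""
    key = (
        ipaddress.ip_address(src_ip).packed
        + ipaddress.ip_address(dst_ip).packed
        + struct.pack("!HHB", src_port, dst_port, proto)
    )
    digest = hashlib.sha256(key).digest()
    return int.from_bytes(digest[:4], "big") % n_buckets


def egress_for(src_ip, dst_ip, src_port, dst_port, proto=6):
    """Every packet of the same flow hashes to the same bucket, hence the same link."""
    bucket = flow_bucket(src_ip, dst_ip, src_port, dst_port, proto, len(BUCKETS))
    return BUCKETS[bucket]


first = egress_for("192.0.2.10", "203.0.113.5", 40000, 443)
second = egress_for("192.0.2.10", "203.0.113.5", 40000, 443)
print(first, first == second)
```

the point of the sketch is only the property the text relies on: the choice depends on header fields, so packets of one session never get reordered across links.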

load balancing a single TCP session over multiple links in the “per packet” model seems like a great idea, until you really try to do it. you can find more information about possible problems here

one other interesting tidbit is that actual forwarding architectures built with disregard for the requirement of keeping the packets of a single flow in order failed miserably in practice. one such example was the Juniper M160, which had four parallel 2.5Gbps forwarding engines to enable 10Gbps interfaces. the same problems were visible with early FPGA-based PC NICs and with those that use multiple ARM cores to forward traffic. there’s even a short whitepaper written by our very own Polish scientists working for the Poznań Supercomputing and Networking Center who, by the way, used Juniper equipment.

what’s the definition of “per session”? well, it started with an easy calculation and became more and more complicated, as the possibilities have expanded significantly in recent years:

- the first implementations of traffic load sharing across multiple interfaces allowed it to be done per packet; this was quickly abandoned, although for the slow but multiplied lines of the 1980s such a solution could be considered acceptable and gave real benefits (it was easier and cheaper to set up another 64kbps link than to ask for one larger 1Mbps link); incidentally, CEF did not support this mode at the beginning - support appeared a little later, shortly before this mode of operation was withdrawn altogether;

- simple round-robin - hash consists of data contained in the source and destination address for IPv4 or IPv6 header (the simplest model that allows you to distribute traffic between gateways fairly well taking into account many source-target pairs)

- default CEF mode - uses not only the addresses but also the ports from the header to calculate the hash; today you can additionally choose whether these should be only source, only target, or both source and target ports (again, you can mix things up a bit here - if you are experimenting, check that all applications behave properly with this configuration - some sadly don’t, and you may, for example, be forced to reauthenticate each time you refresh a bank page)

- CEF mode with polarization protection - it’s enabled today for all modes, while it used to be (in the ’90s) a separate mode of operation; what is this “polarization” thing? in larger networks where there are many parallel interfaces between routers, they will each compute the hash for the same packet in the same way; it may cause traffic overload on some interfaces even though the remaining links will be available and unused or lightly loaded; the modification is that the CEF generates an additional value (salt) with each router startup (it can also be done manually) that’s then additionally superimposed on the result of the original hash;

- CEF mode with tunnel traffic inspection - another nice modification that avoids traffic polarization for encapsulated traffic such as GRE, IPinIP or VXLAN; tunneled traffic between a pair of IPs may, for example, contain many thousands of unique sessions inside; depending on the architecture and hardware capabilities of your router, this option may or may not be available

- CEF mode with DPI function - DPI meaning Deep Packet Inspection - some of the Cisco edge access routers can use their additional features to detect more advanced header protocols - like GRE, L2TP, IPsec, IPinIP, VXLAN or L2VPN

- CEF mode with GTP header inspection - a dedicated mode for mobile (3G/4G) operators; our knowledge of the GTP protocol (which uses UDP encapsulation) is used here to provide optimal per-subscriber load sharing
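
the polarization effect from the list above is easy to reproduce in a few lines of Python. this is a toy model, not the CEF algorithm: the hash is SHA-256, the flows are synthetic, and the `salt` parameter merely stands in for the per-router value mentioned above.

```python
import hashlib


def pick_link(flow, n_links, salt=b""):
    """Pick a link index for a flow; `salt` models the per-router unique value."""
    digest = hashlib.sha256(salt + repr(flow).encode()).digest()
    return int.from_bytes(digest[:4], "big") % n_links


# 2000 synthetic flows: (source IP, destination IP, source port, destination port)
flows = [("198.18.0.%d" % (i % 250), "203.0.113.7", 1024 + i, 443) for i in range(2000)]

# Router 1 splits the flows over two links; router 2 sits behind link 0.
behind_link0 = [f for f in flows if pick_link(f, 2) == 0]

# Router 2 with the identical hash: it repeats router 1's decision for every flow.
unsalted = {pick_link(f, 2) for f in behind_link0}

# Router 2 with its own salt: the same flows are spread over both links again.
salted = {pick_link(f, 2, salt=b"router-2") for f in behind_link0}

print("links used without salt:", sorted(unsalted))
print("links used with salt:   ", sorted(salted))
```

without the salt the second router can only ever pick link 0 for the traffic it receives, which is exactly the “overloaded link next to an idle one” situation described above.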

ok, so I have four interfaces, but they’re different

yeah. we started the discussion with two or three generic interfaces, but typically, if you’re dealing with a “home” router situation, each interface will provide different bandwidth characteristics and may be of a different type. so, for this example, let’s assume I have:

- “business” link, 1Gbps “down” and 1Gbps “up” (ISP “A”)

- “home” link, based on GPON - 1Gbps “down” and 300Mbps “up” (ISP “B”)

- another “home” link, also GPON based, 150Mbps “down” and 60Mbps “up” (ISP “C”)

- and at the end - an LTE link; for now (we’ll get to links that change their characteristics dynamically in future parts of the series) let’s assume it offers a fairly stable 50Mbps “down” and 40Mbps “up” (ISP “D”)

so… that should be easy. with four interfaces on our router, let’s simply define four default routes:

ip route 0.0.0.0 0.0.0.0 GigabitEthernet 0/0/0 198.51.100.37 ! ISP A

ip route 0.0.0.0 0.0.0.0 GigabitEthernet 0/0/1 203.0.113.22 ! ISP B

ip route 0.0.0.0 0.0.0.0 GigabitEthernet 0/0/2 198.18.0.6 ! ISP C

ip route 0.0.0.0 0.0.0.0 Dialer1 ! ISP D

of course, if your ISPs provide native IPv6, the configuration will be the same. if, however, you need to use IPv6inIP tunnels… it will still be the same:

ipv6 route ::/0 Tunnel101 ! tunnel via ISP A, IPv4 destination address reachable over

! ISP A link

ipv6 route ::/0 Tunnel102 ! tunnel via ISP B, IPv4 destination address reachable over

! ISP B link

it will of course work correctly, but every geek will immediately notice that we’re load-balancing the traffic more or less equally, while each of those links offers a different amount of bandwidth:

rtr-edge#sh ip route

[...]

S* 0.0.0.0/0 [1/0] via 198.51.100.37, GigabitEthernet0/0/0 ! 1000Mbps "available"

[1/0] via 203.0.113.22, GigabitEthernet0/0/1 ! 300Mbps "available"

[1/0] via 198.18.0.6, GigabitEthernet0/0/2 ! 60Mbps "available"

is directly connected, Dialer1 ! 40Mbps "available"

quick & dirty trick with non-recursive routing

the first trick I’m going to share with you, one that was available in the past, is to add additional static routes via intermediate next-hops to make up for the bandwidth differences.

we have following Mbps values:

1000 : 300 : 60 : 40

if you divide all of those by the smallest value, which is 40, and round up (300/40 = 7.5, 60/40 = 1.5), you get minimum weights of:

25 : 8 : 2 : 1
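
the arithmetic can be written down as a tiny Python helper. the function name `weights_from_bandwidth` is made up for this sketch, and rounding up is an assumption chosen because it reproduces the 25 : 8 : 2 : 1 figures used here.

```python
import math


def weights_from_bandwidth(mbps):
    """Divide every uplink speed by the slowest one and round up to a whole weight."""
    slowest = min(mbps)
    return [math.ceil(speed / slowest) for speed in mbps]


uplinks = [1000, 300, 60, 40]          # ISP A, B, C, D uplink speeds in Mbps
weights = weights_from_bandwidth(uplinks)
total_routes = sum(weights)            # how many routes a naive 1:1 mapping would need

print(" : ".join(str(w) for w in weights), "->", total_routes, "routes")
```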

so… we have to define 36 routes? no, it doesn’t make much sense. we can just easily assume, that the “business” link offering 1Gbps should provide most of the uplink speed, 300Mbps about 1/3 of the first one, 60Mbps link only 1/5 of the 300Mbps link and 40Mbps - half of what 60Mbps gets.

the way you configure those intermediate routes is to trick early CEF implementations into thinking they point to unique output interfaces.

so let’s assume we want 10 routes in total over the ISP A link, whose original route looked like this:

ip route 0.0.0.0 0.0.0.0 GigabitEthernet 0/0/0 198.51.100.37

let’s define 9 intermediate routes:

ip route 192.0.2.10 255.255.255.255 198.51.100.37

ip route 192.0.2.11 255.255.255.255 198.51.100.37

ip route 192.0.2.12 255.255.255.255 198.51.100.37

ip route 192.0.2.13 255.255.255.255 198.51.100.37

ip route 192.0.2.14 255.255.255.255 198.51.100.37

ip route 192.0.2.15 255.255.255.255 198.51.100.37

ip route 192.0.2.16 255.255.255.255 198.51.100.37

ip route 192.0.2.17 255.255.255.255 198.51.100.37

ip route 192.0.2.18 255.255.255.255 198.51.100.37

…and now let’s add 9 matching default routes, but pointing to those defined above, not the original one:

ip route 0.0.0.0 0.0.0.0 192.0.2.10

ip route 0.0.0.0 0.0.0.0 192.0.2.11

ip route 0.0.0.0 0.0.0.0 192.0.2.12

ip route 0.0.0.0 0.0.0.0 192.0.2.13

ip route 0.0.0.0 0.0.0.0 192.0.2.14

ip route 0.0.0.0 0.0.0.0 192.0.2.15

ip route 0.0.0.0 0.0.0.0 192.0.2.16

ip route 0.0.0.0 0.0.0.0 192.0.2.17

ip route 0.0.0.0 0.0.0.0 192.0.2.18

at first glance it may look absurd, but CEF in IOS up to and including version 12.1 didn’t resolve next-hops over more than one level of indirection, so each of these routes counted as a separate path. the nice side effect was that it offered UCMP (Unequal Cost Multi Path) capabilities out of the box.

let’s add three additional routes over the second interface:

ip route 192.0.2.20 255.255.255.255 203.0.113.22

ip route 192.0.2.21 255.255.255.255 203.0.113.22

ip route 192.0.2.22 255.255.255.255 203.0.113.22

ip route 0.0.0.0 0.0.0.0 192.0.2.20

ip route 0.0.0.0 0.0.0.0 192.0.2.21

ip route 0.0.0.0 0.0.0.0 192.0.2.22
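
typing these pairs by hand gets tedious, so here is a small Python sketch that prints them. `replica_routes` is a hypothetical helper written for this post; it only formats the same `ip route` lines as above and does not talk to any router.

```python
import ipaddress


def replica_routes(real_next_hop, first_helper, count):
    """Build the host routes and the matching default routes for `count` helper IPs."""
    start = ipaddress.IPv4Address(first_helper)
    helpers = [str(start + offset) for offset in range(count)]
    host_routes = [f"ip route {h} 255.255.255.255 {real_next_hop}" for h in helpers]
    default_routes = [f"ip route 0.0.0.0 0.0.0.0 {h}" for h in helpers]
    return host_routes + default_routes


# The three extra paths over ISP B, as in the listing above.
for line in replica_routes("203.0.113.22", "192.0.2.20", 3):
    print(line)
```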

CEF will now divide its 16 hash buckets (or 32, or 64, depending on your platform) between the paths. the first 10 will point to the gigabit link, the next 4 to the 300Mbps link, and the last 2 to the slower GPON link and the LTE link (one each):

router#sh ip cef 0.0.0.0 0.0.0.0 internal

0.0.0.0/0, version 24, epoch 0, per-destination sharing

[...]

tmstats: external 0 packets, 0 bytes

internal 0 packets, 0 bytes

Load distribution: 0 0 0 0 0 0 0 0 0 0 1 1 1 1 2 3

while it isn’t exactly accurate yet, traffic will be distributed in 10:4:1:1 ratios.
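
how far off is it? a quick Python check, taking the bucket row from the output above and the uplink speeds assumed earlier (the helper names are invented for this sketch):

```python
def bucket_shares(buckets, n_links):
    """Fraction of hash buckets that point at each link."""
    return [buckets.count(link) / len(buckets) for link in range(n_links)]


def ideal_shares(mbps):
    """Fraction of the total uplink bandwidth that each link provides."""
    total = sum(mbps)
    return [speed / total for speed in mbps]


# "Load distribution: 0 0 0 0 0 0 0 0 0 0 1 1 1 1 2 3"
load_distribution = [0] * 10 + [1] * 4 + [2] + [3]

actual = bucket_shares(load_distribution, 4)
ideal = ideal_shares([1000, 300, 60, 40])

for name, got, want in zip("ABCD", actual, ideal):
    print(f"ISP {name}: buckets {got:6.1%}   bandwidth {want:6.1%}")
```

the gigabit link ends up with a smaller share of buckets than its share of bandwidth, and the two slow links with a larger one, which is the inaccuracy mentioned above.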

well, defining numerous routes takes some CLI work, but what’s more important - CEF was quickly modified.

as it is difficult to accept such neglect as not resolving the next-hop “till you drop” before building the optimal FIB table, the mechanism has been improved in newer IOS versions (including IOS-XE, NX-OS and obviously IOS XR). in current versions this trick will not work anymore. no matter how many such indirections we define, CEF will always resolve the true next-hop first and only then install it in the FIB as the best route. here’s an example for a route with five indirect statements:

path list 7EFF6F9FA7E8, 3 locks, per-destination,

path 7EFF6FA7FFA8, share 1/1, type recursive, for IPv4

recursive via 192.0.2.1[IPv4:Default], fib 7EFF779F6F88, 1 terminal fib, v4:Default:192.0.41.1/32

path list 7EFF6F9FA888, 3 locks, per-destination, flags 0x69 [shble, rif, rcrsv, hwcn]

path 7EFF6FA80528, share 1/1, type recursive, for IPv4

recursive via 192.0.21.1[IPv4:Default], fib 7EFF779F7088, 1 terminal fib, v4:Default:192.0.41.1/32

path list 7EFF6F9FAB08, 3 locks, per-destination, flags 0x69 [shble, rif, rcrsv, hwcn]

path 7EFF6FA80268, share 1/1, type recursive, for IPv4

recursive via 192.0.31.1[IPv4:Default], fib 7EFF779F7188, 1 terminal fib, v4:Default:192.0.41.1/32

path list 7EFF6F9FA9C8, 3 locks, per-destination, flags 0x69 [shble, rif, rcrsv, hwcn]

path 7EFF6FA807E8, share 1/1, type recursive, for IPv4

recursive via 192.0.41.1[IPv4:Default], fib 7EFF6F9F8E20, 1 terminal fib, v4:Default:192.0.41.1/32

path list 7EFF6F9FAD88, 9 locks, per-destination, flags 0x69 [shble, rif, rcrsv, hwcn]

path 7EFF0C6D9620, share 1/1, type recursive, for IPv4

recursive via 10.0.2.254[IPv4:Default], fib 7EFF0C6D8838, 1 terminal fib, v4:Default:10.0.2.254/32

path list 7EFF09E95768, 2 locks, per-destination, flags 0x49 [shble, rif, hwcn]

path 7EFF09E95FB8, share 1/1, type adjacency prefix, for IPv4

attached to GigabitEthernet2, IP adj out of GigabitEthernet2, addr 10.0.2.254 7EFF740B1110
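
why the trick stops working once the next-hop is fully resolved can be shown with a toy model in Python. the `RIB` dictionary below is a made-up miniature of the ISP A example (including the assumed connected subnet 198.51.100.36/30); it is not how CEF stores its data, only an illustration of the outcome.

```python
import ipaddress

# Hypothetical routing table: prefix -> next hops. A next hop is either an IP
# address (needs another lookup) or ("attached", interface) for a connected route.
RIB = {
    "0.0.0.0/0": ["192.0.2.10", "192.0.2.11", "192.0.2.12"],
    "192.0.2.10/32": ["198.51.100.37"],
    "192.0.2.11/32": ["198.51.100.37"],
    "192.0.2.12/32": ["198.51.100.37"],
    "198.51.100.36/30": [("attached", "GigabitEthernet0/0/0")],
}


def lookup(ip):
    """Longest-prefix match of `ip` against the toy RIB."""
    addr = ipaddress.ip_address(ip)
    matches = [ipaddress.ip_network(p) for p in RIB if addr in ipaddress.ip_network(p)]
    best = max(matches, key=lambda net: net.prefixlen)
    return RIB[str(best)]


def resolve(ip, depth=0):
    """Follow recursive next hops until an attached interface is reached."""
    if depth > 8:
        raise RuntimeError("next-hop recursion too deep")
    paths = set()
    for hop in lookup(ip):
        if isinstance(hop, tuple):
            paths.add((hop[1], ip))
        else:
            paths |= resolve(hop, depth + 1)
    return paths


final_paths = set()
for next_hop in RIB["0.0.0.0/0"]:
    final_paths |= resolve(next_hop)

print(len(RIB["0.0.0.0/0"]), "configured routes ->", len(final_paths), "real path:", final_paths)
```

three configured default routes collapse into a single (interface, next-hop) pair, so there is nothing left to weigh against the other links.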

some other network vendors have not mastered this optimization yet, and are unable to resolve next-hop interfaces and next-hop fields this way; it doesn’t automatically mean that these solutions are worse, but indeed from the perspective of FIB table main characteristics - convergence, programming and reprogramming, CEF generally outclasses other solutions available on the market

at least one of the network equipment vendors has experimented with compressing the finished FIB table (instead of optimizing its structure); however, these experiments ended up with serious problems in production networks where not only the compression did not match the promised gains, but often during reconvergence it caused memory overflows, software crashes and, worst of all, “silent” rejection of traffic to random prefixes; of course it was an attempt to save on the more expensive, faster TCAMs/SRAMs that usually store FIB; I would also like to point out that traditionally these cards were tested with hundreds of thousands of prefixes but these prefixes were selected sequentially - which meant that the compression mechanism could work quite effectively during the tests, but at the same time such test was completely unrepresentative for real networks

well, can’t it be just nice and easy?

the easiest solution is available today with IOS XR. XR was designed and built right from the start for ISPs, and it took a lot of existing experience and real-life use case scenarios from 15 years of running CEF in IOS.

so in IOS XR you can solve our problem with the following configuration:

RP/0/0/CPU0:XR1#sh running-config router static

router static

address-family ipv4 unicast

0.0.0.0/0 198.51.100.37 metric 25 ! 25 : 8 : 2 : 1

0.0.0.0/0 203.0.113.22 metric 8 ! 25 : 8 : 2 : 1

0.0.0.0/0 198.18.0.6 metric 2 ! 25 : 8 : 2 : 1

0.0.0.0/0 Dialer1 ! you can say that such interfaces don't exist in

! IOS XR - let's just assume it's not the most

! important issue right now

!

!

once that’s done, the router will load balance the traffic according to our definition:

RP/0/0/CPU0:XR1#sh cef 0.0.0.0/0 detail

0.0.0.0/0, version 17, proxy default, internal 0x1000011 0x0 (ptr 0xa13f307c)

[...]

Weight distribution:

slot 0, weight 25, normalized_weight 25, class 0

slot 1, weight 8, normalized_weight 8, class 0

slot 2, weight 2, normalized_weight 2, class 0

slot 3, weight 1, normalized_weight 1, class 0

Hash OK Interface Address

0 Y GigabitEthernet0/0/0/0 198.51.100.37

1 Y GigabitEthernet0/0/0/0 198.51.100.37

[...]

24 Y GigabitEthernet0/0/0/0 198.51.100.37

25 Y GigabitEthernet0/0/0/1 203.0.113.22

26 Y GigabitEthernet0/0/0/1 203.0.113.22

[...]

32 Y GigabitEthernet0/0/0/1 203.0.113.22

33 Y GigabitEthernet0/0/0/2 198.18.0.6

34 Y GigabitEthernet0/0/0/2 198.18.0.6

35 Y Dialer1
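
the slot table above can be read as cumulative weight ranges. a short Python sketch of that reading follows; `PATHS`, `BOUNDS` and `path_for_slot` are names invented here, and the layout is inferred from the output shown, not taken from IOS XR internals.

```python
from bisect import bisect_right
from itertools import accumulate

# (interface, weight) pairs in the order of the "Weight distribution" section.
PATHS = [
    ("GigabitEthernet0/0/0/0", 25),
    ("GigabitEthernet0/0/0/1", 8),
    ("GigabitEthernet0/0/0/2", 2),
    ("Dialer1", 1),
]

# Running totals of the weights: [25, 33, 35, 36]
BOUNDS = list(accumulate(weight for _, weight in PATHS))


def path_for_slot(slot):
    """Return the interface that owns a given hash slot (0..35 here)."""
    if not 0 <= slot < BOUNDS[-1]:
        raise ValueError("slot out of range")
    return PATHS[bisect_right(BOUNDS, slot)][0]


for slot in (0, 24, 25, 32, 33, 34, 35):
    print(slot, path_for_slot(slot))
```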

OK, not everyone has an ASR 9000 at home, or an NCS 540/560 or NCS 5500… or some other XR-enabled platform. you can obviously run XRv9000, but then you won’t have NAT or a PPPoA/oE client, not to mention support for “dialer” interfaces.

so let’s look at access routers

in the next part we’ll go back to what’s available today on access routers. and then I’ll present my current solution to the problem.